
What Marketers Should Know About Artificial Empathy

By Nicola Brown on May 16, 2018

Need a little pick-me-up? Remember the adorable moment when WALL-E first meets EVE?

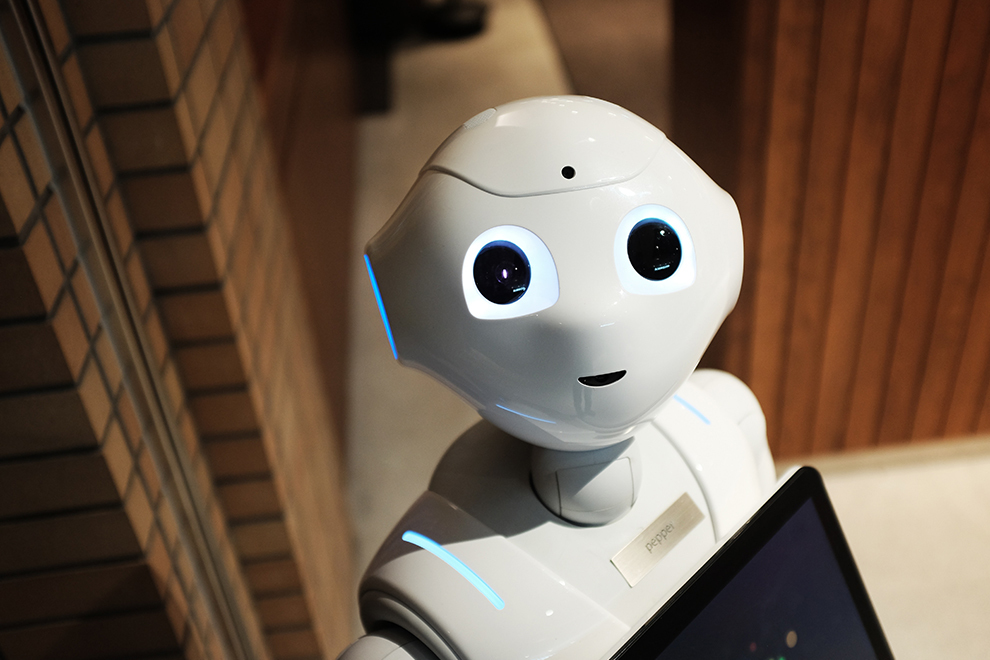

Sci-fi has explored the concept of robots with feelings extensively. Her, WALL-E, and Westworld are a few of my favorites. But machines with an emotional dimension may be closer than we think. In fact, many research labs and companies already run software that's designed to understand and respond to what you're feeling, not just what you're saying. These applications of artificial empathy are wide-ranging, from market research to transportation to marketing and advertising.

What Is Artificial Empathy?

Artificial empathy isn't quite robots with feelings, but its goal is to detect and respond to human emotions. It is related to artificial intelligence without being the same thing: you can think of artificial intelligence as the umbrella technology that encompasses many branches of development, including artificial empathy.

Many people at the forefront of artificial empathy believe it's a crucial step in the evolution of artificial intelligence, not only for creating a fully intelligent robot but also for helping people accept such robots.

But even without the physical form most of us associate with robots, artificial empathy is already being woven into everyday life, from the automotive systems in your car to the apps on your phone.

Image attribution: Alex Knight

Humanizing Interactions Between People and Technology

We often take for granted the ability to recognize other people's emotions. It's a mostly intuitive capability that we don't think too hard about. The science of empathy reveals that this complex capability ranges from simple gesture mimicry to cognitive perspective-taking. For computers, however, recognizing emotions is genuinely hard work. Our brains combine multiple inputs to detect others' emotions, such as non-verbal facial cues (expressions) and tone of voice. Software that detects feelings must break these cues down into the same individual components we rely on ourselves.

Boston-based startup Affectiva uses facial and vocal analysis software to identify emotions and cognitive states, pioneering the integration of artificial empathy into our daily lives. It calls its software artificial emotional intelligence, or Emotion AI.

For faces, Affectiva relies on optical sensors such as your smartphone camera or webcam. Algorithms first detect your face, then home in on target areas like the corners of the mouth, the eyebrows, and the tip of the nose. Different patterns in these areas are mapped to facial expressions, and combinations of those patterns are in turn mapped to emotions. The company currently measures seven emotions: anger, contempt, disgust, fear, joy, sadness, and surprise.
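To make that two-stage mapping (regional patterns first, then combinations of patterns to emotions) a little more concrete, here is a minimal Python sketch. It assumes an upstream landmark tracker has already turned the mouth corners, eyebrows, and nose into pattern intensities between 0 and 1. The pattern names, rules, and thresholds are hypothetical placeholders for illustration only; they are not Affectiva's actual model.

```python
from typing import Dict, Set

# Hypothetical stage-2 rules: each of the seven emotions is treated as a
# combination of facial patterns. Illustrative placeholders, not a real model.
EMOTION_RULES: Dict[str, Set[str]] = {
    "anger": {"brows_lowered", "lips_pressed"},
    "contempt": {"one_mouth_corner_up"},
    "disgust": {"nose_wrinkled", "upper_lip_raised"},
    "fear": {"brows_raised", "eyes_widened", "lips_stretched"},
    "joy": {"mouth_corners_up", "cheeks_raised"},
    "sadness": {"inner_brows_raised", "mouth_corners_down"},
    "surprise": {"brows_raised", "eyes_widened", "jaw_dropped"},
}


def detect_patterns(measurements: Dict[str, float], threshold: float = 0.5) -> Set[str]:
    """Stage 1: keep the patterns whose intensity (0 to 1), measured from
    tracked regions such as the mouth corners and eyebrows, meets the threshold."""
    return {name for name, value in measurements.items() if value >= threshold}


def score_emotions(patterns: Set[str]) -> Dict[str, float]:
    """Stage 2: score each emotion by the fraction of its patterns present."""
    return {
        emotion: len(required & patterns) / len(required)
        for emotion, required in EMOTION_RULES.items()
    }


def top_emotion(scores: Dict[str, float], min_score: float = 0.5) -> str:
    """Return the highest-scoring emotion, or 'neutral' if none is convincing."""
    best = max(scores, key=scores.get)
    return best if scores[best] >= min_score else "neutral"


if __name__ == "__main__":
    # Made-up intensities standing in for the output of a landmark tracker.
    frame = {"brows_raised": 0.9, "eyes_widened": 0.7, "jaw_dropped": 0.6, "mouth_corners_up": 0.2}
    scores = score_emotions(detect_patterns(frame))
    print(top_emotion(scores))  # surprise
```

Note how fear and surprise share patterns here: with raised brows and widened eyes, fear still scores two out of three, and only the dropped jaw tips the result toward surprise. That overlap is one reason combinations, rather than single cues, matter.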

For voice, the company analyzes speech events, which are elements like tone, tempo, loudness, and voice quality. The focus is not on what we say but on how we say it.
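As a rough illustration of how two of those elements can be measured from raw audio, the sketch below uses standard signal-processing techniques: loudness as a root-mean-square level in decibels relative to full scale, and tone as pitch estimated by autocorrelation. These are generic textbook methods assumed here for illustration, not Affectiva's pipeline; tempo and voice quality require more involved analysis and are left out.

```python
import math
from typing import List


def rms_db(frame: List[float]) -> float:
    """Loudness proxy: root-mean-square level in dB relative to full scale (1.0)."""
    rms = math.sqrt(sum(x * x for x in frame) / len(frame))
    return 20 * math.log10(rms) if rms > 0 else float("-inf")


def estimate_pitch(frame: List[float], sample_rate: int,
                   fmin: float = 75.0, fmax: float = 400.0) -> float:
    """Tone proxy: find the lag (in samples) where the signal best matches a
    shifted copy of itself, searching only lags that correspond to a typical
    speaking-pitch range, then convert that lag to a frequency in Hz."""
    min_lag = int(sample_rate / fmax)
    max_lag = int(sample_rate / fmin)
    best_lag, best_corr = min_lag, float("-inf")
    for lag in range(min_lag, max_lag + 1):
        corr = sum(frame[i] * frame[i + lag] for i in range(len(frame) - lag))
        if corr > best_corr:
            best_lag, best_corr = lag, corr
    return sample_rate / best_lag


if __name__ == "__main__":
    sr = 8000
    # Synthetic 0.1-second "voice": a 200 Hz tone at half of full scale.
    frame = [0.5 * math.sin(2 * math.pi * 200 * n / sr) for n in range(800)]
    print(f"loudness: {rms_db(frame):.1f} dBFS")  # about -9.0
    print(f"pitch: {estimate_pitch(frame, sr):.0f} Hz")  # about 200
```

Real speech is far messier than a pure tone, so production systems typically normalize the autocorrelation, work on short overlapping frames, and track how these values change over time, since a rising pitch or a sudden jump in loudness says more about emotion than any single reading.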

Affectiva draws on a data repository of more than 6.5 million faces from 87 countries, and its software becomes more accurate the more data it analyzes.

Applications of Artificial Empathy

The potential for artificial empathy is broad. Consider customer service and health care, for example. We could help prevent emotional burnout in nurses by using artificial empathy to assist in patient care. We could support our customer service teams by using the software to better direct and escalate customer inquiries.

Or how about education? We could use artificial empathy to personalize educational experiences and offer more tailored solutions for those who need different approaches from the norm. We could use it for social training to help those with autism better handle the daily challenges they face.

Artificial empathy is a powerful market research tool that can help capture sentiment our target demographics don't consciously express. You may mark "somewhat agree" in a survey, but if you give that same feedback in an annoyed tone of voice, you probably mean something quite different. With artificial empathy, we can begin to get the benefits of in-person focus groups with less overhead and greater geographic and cultural reach.

When it comes to marketing and advertising, we could analyze real-time impressions from our campaigns, apps, and products. We could even use these impressions to build responsive and interactive software and experiences. Imagine being able to deliver what customers need, even when they can't consciously articulate it themselves.

Affectiva's CMO Gabi Zijderveld sheds some light on just how prevalent its artificial empathy technology already is:

"Brands, advertisers, and market researchers use Affectiva's technology to gain a deeper understanding of consumers' emotional engagement with their digital content, such as videos, ads, and TV programming. Currently, one-third of Fortune Global 100 companies and over 1,400 brands use Affectiva's Emotion AI technology to better understand audience reactions to content, and optimize campaigns and media spend accordingly.

"One example is Mars, Inc., which wanted to assess consumer responses to their advertising, and if these responses could predict sales. Using Affectiva's Emotion AI for facial expressions of emotion, Mars and Affectiva studied the correlation between facial reactions and emotional responses to sales effectiveness for products such as chocolate, gum, pet care, and instant foods. Ultimately, Mars found that Affectiva's emotion analytics was able to more accurately predict short-term sales than relying solely on self-report methods."

Although the capabilities of current artificial empathy machines are impressive, they still have a long way to go. One of the most challenging hurdles in this domain is known as other-self discrimination. We are very good at understanding how another person is feeling while distinguishing that from how we ourselves are feeling. This is what researchers call cognitive empathy, and it remains difficult for current AI systems. It's the nuanced, higher-level behavior that stops us from laughing at something we find amusing in an otherwise somber situation.

Image attribution: David Yanutama

Why Artificial Empathy Is Important

Zijderveld believes that artificial empathy can help take our interactions with computers beyond the surface level. Computers have plenty of smart "cognitive" abilities but lack emotional and social dimensions. "This is a problem businesses need to face," she says. "It renders products and experiences to be ineffective and superficial, which does little to build brand loyalty or meaningful interactions between consumers and brands."

In other words, if we're going to increasingly incorporate technology into our marketing and customer relationship efforts, we need to make sure it doesn't sever the human connections we build. Artificial empathy can help businesses create more meaningful and effective interactions.

It's also crucial for understanding consumer behavior more deeply and capturing unfiltered emotional reactions. That isn't always easy, especially across large geographic regions and populations, where intimate, in-person interactions are impractical. Even when we do conduct in-person research, self-reported feelings, which people consciously filter, often differ from what they actually feel.

Artificial empathy may sound like sci-fi, but it's already becoming part of many digitally mediated interactions. Marketers are increasingly looking to build AI capabilities to uncover hidden insights and deliver better customer experiences at scale. Artificial empathy looks set to be AI's next frontier.

For more stories like this, subscribe to the Content Standard newsletter.

Featured image attribution: Andy Kelly