Do Readability Scores Matter?

By Nicola Brown on April 2, 2018

Let me ask you a question: Has anyone really been far even as decided to use even go want to do look more like?

Don't worry, you're not having a stroke. If you know your memes, you'll know that this is probably the most famously confounding sentence on the Internet. The question first surfaced in 2009 on a videogame forum and has been meme-ified ever since. Nobody has been able to solve the mystery of its meaning definitively, though there are probably as many theories as speculators.

The Internet has spent years struggling with the lack of readability in this sentence.

Unless you are aiming for this kind of one-in-a-million meme-level word salad, readability level is an important consideration for your content.

What Is Readability?

There are plenty of tools out there to measure readability. Maybe you're already using one or have one integrated into your content management system. A quick search of "readability scores" on Google returns myriad tools for assessing the readability of your content. So what is readability, exactly?

At the risk of stating the obvious, readability is the ease with which a reader can understand a written text.

In their seminal 1949 article, Edgar Dale and Jeanne Chall put forward a more comprehensive definition of readability: "The sum total (including all the interactions) of all those elements within a given piece of printed material that affect the success a group of readers have with it. The success is the extent to which they understand it, read it at an optimal speed, and find it interesting."

Since then, a large and very complicated field of readability research and scoring methods has emerged. According to William H. DuBay in his book The Principles of Readability, by the 1980s there were already 200 formulas and over a thousand studies published on these readability formulas.

Some of the most popular formulas today are the Flesch-Kincaid formula, the Dale-Chall formula, the Gunning Fog Index, the SMOG formula, and the FORCAST formula, to name a few. Each of these takes into account different variables such as the number of words, number of sentences, number of syllables, number of "difficult" or "hard" words, and number of words with more than two syllables.
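To make those variables concrete, here is a minimal Python sketch of two of the formulas, using the commonly published coefficients for the Flesch-Kincaid grade level and the Gunning Fog Index. The helper names (`count_syllables`, `text_stats`) are hypothetical, and the syllable counter is a rough vowel-group heuristic rather than a dictionary lookup, so expect its scores to drift from those of commercial tools.

```python
import re


def count_syllables(word: str) -> int:
    """Rough estimate: one syllable per group of consecutive vowels (an assumption)."""
    groups = re.findall(r"[aeiouy]+", word.lower())
    return max(1, len(groups))


def text_stats(text: str) -> dict:
    """Collect the raw counts that the formulas below are built from."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one word and one sentence")
    syllable_counts = [count_syllables(w) for w in words]
    return {
        "sentences": len(sentences),
        "words": len(words),
        "syllables": sum(syllable_counts),
        # Gunning Fog treats words of three or more syllables as "complex".
        "complex_words": sum(1 for n in syllable_counts if n >= 3),
    }


def flesch_kincaid_grade(text: str) -> float:
    """Flesch-Kincaid grade level: sentence length plus syllables per word."""
    s = text_stats(text)
    return 0.39 * s["words"] / s["sentences"] + 11.8 * s["syllables"] / s["words"] - 15.59


def gunning_fog(text: str) -> float:
    """Gunning Fog Index: sentence length plus the share of complex words."""
    s = text_stats(text)
    return 0.4 * (s["words"] / s["sentences"] + 100 * s["complex_words"] / s["words"])


if __name__ == "__main__":
    sample = "The cat sat on the mat. The dog ran."
    print(round(flesch_kincaid_grade(sample), 3))
    print(round(gunning_fog(sample), 3))
```

Notice that the two functions read the same text through different variables: one counts every syllable, the other only counts words that cross a syllable threshold. That difference alone is enough for the same passage to land on different grade levels.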

It quickly becomes obvious that there are many ways to skin this cat. It turns out it's quite difficult to reduce the complexity of language down to a mathematical formula.

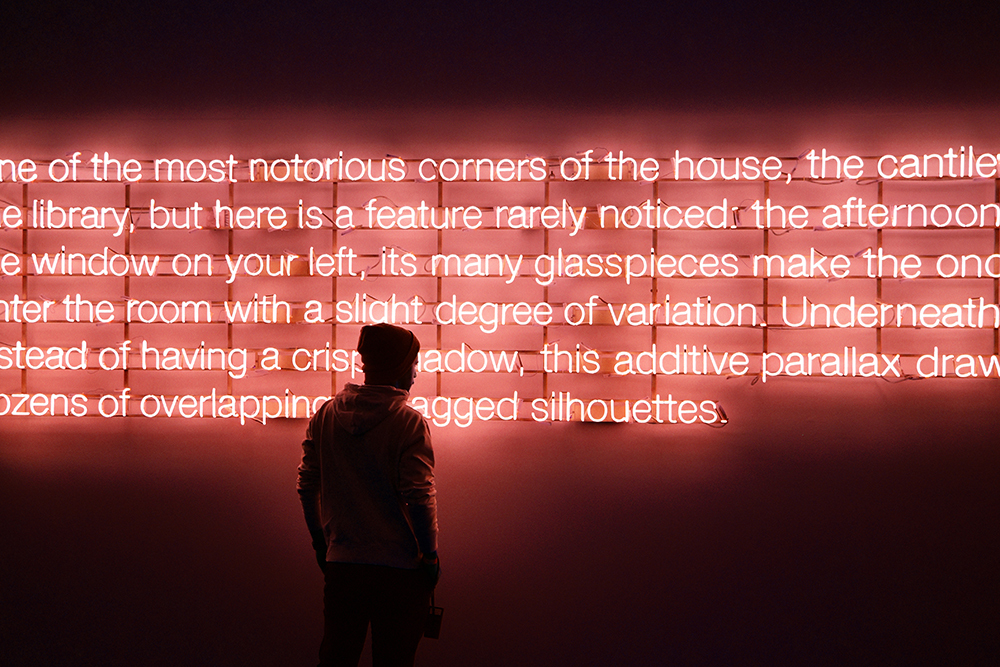

Image attribution: Pedro Nogueira

The Limits of Readability Software

Readability formulas have been controversial throughout their almost century-long evolution, and there are plenty of discrepancies between different formulas.

According to researchers Walter Kintsch and Douglas Vipond, the problem with relying on readability software based on formulas is that formulas have predictive validity only. This means that even if you could unequivocally determine that long words and sentences caused comprehension difficulty, this does not mean that shortening them would remove the difficulty.

This is really important to understand, as many of the readability software tools we use all the time don't just give us readability scores; they give us suggestions for improvement. Some of them require you to implement those suggestions before they give you a green light on readability. Some even block publishing until you get that green light.

The logic here is fundamentally flawed. By binding ourselves to the strict dictates of these software tools, we may even be hurting our content.

And the limits extend beyond how the software implements the formulas to the formulas themselves. Many of the current readability tools available use the earliest formulas for assessing readability. It wasn't until the 1950s that linguistics and cognitive psychology began to play a role in refining how we understand readability to include ideas like interest, motivation, and prior knowledge.

Each of these factors is cognitively important for readability, but none of them can be easily measured (if at all) from text alone. This means that even the best and most scientifically valid readability software tools can only go so far in helping marketers and creatives produce readable content.

"The variables used in the readability formulas show us the skeleton of a text. It is up to us to flesh out that skeleton with tone, content, organization, coherence, and design," says DuBay.

How to Optimize Your Content for Readability (and When to Ignore It)

Know Your Audience

We ought to know our audiences well enough to know their level of reading comprehension. If we are speaking to the average American, we should be aiming for a 7th-grade level of ease. This is the optimal average that most readability algorithms target. However, if we are aiming to reach senior-level professionals, their average literacy level will be higher, and by writing at a level below that of our audience, we risk not only losing their interest but also sounding condescending by over-explaining.

Compare New Content with Successful Old Content

One of the most accurate ways to gauge the readability of our content is to compare it with older content that connected successfully with the same audience. Note the tone, organization, and format of that content along with the vocabulary and sentence length. Check whether the new content flows from one paragraph to the next as well as the old content does. Read them side by side. Consider your level of understanding, affinity, and emotional reaction after reading both pieces.

Hold Focus Groups

Sometimes we just need to present our content and ask other humans what they think. Focus groups are a great way to get at the aspects of readability that software can't measure. Is your target audience motivated to read the content? How does their prior knowledge affect their understanding? You can even conduct your own mini-comprehension tests, asking people questions that relate to the content to see how much of it was understood, retained, and processed in the way you were hoping it would be. This taps into the bigger picture of content optimization. Focus groups can help assess whether our content is achieving our strategic communication goals.

Don't Assume a Better Score Is Better for Your Audience

Sometimes we want to challenge our audience and leave them thinking and puzzling over our content a little longer, as with a think piece rather than a how-to. In this case, a better readability score isn't necessarily what we're aiming for. For that matter, if you're speaking to a niche audience in a highly technical field, your content may receive lower readability scores by default, but prioritizing readability over the expectations of your audience could threaten your status as a subject matter expert.

Don't Leave It All to Software

In the end, good readability (which is to say, the right level of readability for your audience, not the best readability scores) can only be perfected with human oversight. We need to remember not to let software have the final say, and not to rely on its scoring and suggestions alone. Ultimately, you know your brand, your tone, your approach, and your audience best. Trust your expertise.

Readability software tools may come with nearly a century's worth of research and refinement, but they are guides, not rules. We should use them to see whether we're in the right ballpark, and then let our talented teams of humans take the reins.

Featured image attribution: Ben White