The Risks and Rewards of Using Generative AI for Content Creation: What Brand Marketers Need to Know

By Casey Nobile on February 2, 2023

If you don't have 10 minutes to read this in its entirety, here's the TL;DR:

Generative AI has progressed to the point of generating content with enough proficiency to rival human creators. Despite these advancements, marketers should be aware of the risks and limitations that come with generative AI before they dive into using it for content creation. It is prone to fabricating quotes, presenting unreliable facts, and generating unoriginal content devoid of expert-level insight, and each of these is a factor to consider.

ChatGPT's public release has caused interest in AI-generated content to skyrocket, but it's important to note that leading media publishers have utilized automated reporting for years now, which provides some insight into initial use cases and public reactions to the technology.

We can anticipate that as this technology advances and becomes more accessible, more AI-generated content will flood the market, making it increasingly difficult for marketers to compete for digital visibility.

However, as we've seen with the rise and subsequent erosion of paid media efficacy, those who become overly dependent on AI-generated content could easily find themselves at a significant disadvantage when detection algorithms, blocking tools, and data-usage regulations catch up to rebalance the scale in favor of consumers' demand for authentic, high-quality content.

For me, this whole debate only underscores the longstanding fact that there aren't really shortcuts to creating top-tier marketing content. Leading the market requires market-leading content, which includes original thinking, unique value, and help above and beyond what buyers ask for and competitors offer. AI will be essential to accelerating the creation and delivery of high-quality content, but it's not the solution in itself.

The aim of this article is to give marketers the information they need to make educated decisions about generative AI by outlining its benefits and drawbacks, particularly for brand content creation.

Before we dive into the details, let's define some key terms.

Generative AI is a subset of artificial intelligence. It's a type of machine learning that involves training algorithms to 'learn' from existing content and apply those learnings to the autonomous generation of 'new' content (images, text, music, etc.).

ChatGPT is a chatbot application developed by OpenAI that uses generative AI to interpret user prompts and respond to them with human-like fluency.

GPT-3 (Generative Pre-trained Transformer 3) is the family of generative AI models that ChatGPT is built on (at launch, ChatGPT ran on a fine-tuned model from the GPT-3.5 series). These models were trained to specialize in generating human-like text in response to a text prompt, such as a question, command for information, or statement.

DALL-E (a name that blends the artist Salvador Dalí with Pixar's WALL-E) is another generative AI model developed by OpenAI that specializes in generating images based on text prompts.

What's the buzz around ChatGPT?

OpenAI triggered a media frenzy when it opened its ChatGPT interface for the public to engage with. The fact that the chatbot can respond to a broad range of questions and commands with human-like fluency and coherence sparked a flood of interest in the potential applications of GPT-3 and similar AI models.

Public 'testing' of ChatGPT and its sister product, DALL-E, has also exposed significant limitations and legal implications of generative AI models, even though some of these models have been built into assistive tools for creators for years.

A central question within the content marketing industry: Is generative AI good enough to take on assignments and create content as well and as efficiently as humans? Specifically under debate is whether or not generative AI models like those used in ChatGPT and DALL-E will replace human content creators entirely. The short answer: we're just not there yet.

Use of automated content in media

As mentioned above, for over a decade, large media companies have taken advantage of generative AI—both homegrown and third-party-provided—to handle rote reporting tasks. Some examples include:

- The Associated Press and Bloomberg using AI to generate articles on company earnings reports and sports coverage.

- The Washington Post and Guardian Australia using AI to generate local sports event coverage and short reports and alerts on election and Olympic Games outcomes.

- The Los Angeles Times using AI to report on earthquakes and other natural disasters.

- Forbes using AI to support writers with rough drafts and story templates.

The key benefit automated reporting provides in these cases is scale. With AI, these companies have been able to generate more articles (thousands more, as reported in the case of Bloomberg) and more clicks than they could have achieved otherwise.

The applications mainly involve synthesizing standardized data into standardized templates: corporate earnings summaries, game scores, natural disaster stats, etc., boosting the quantity and the speed of news output without compromising the quality and integrity of the publications' more in-depth journalism.

AI has (mostly) proven its mettle in these types of narrow content creation applications, where data and event summarization—rather than art or opinion—is enough to satisfy what readers are looking for.

CNET is a recent exception and cautionary tale. Their in-house AI model made mistakes that slipped past the copy desk, such as transposing numbers, misspelling company names, and plagiarizing without proper citation when synthesizing financial news. As a result, competitors put the company on blast, and, arguably, its reputation has suffered.

Use among media publishers has demonstrated that editorial oversight is essential when it comes to AI-generated content, no matter how basic the content assignment is. And journalistic best practice is to cite AI's contribution in bylines to remain ethically transparent.
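
One narrow slice of that editorial oversight can be partly automated. The sketch below is a minimal illustration, not a description of any publisher's actual workflow: it flags numbers that appear in an AI-generated draft but not in the source data, which is the kind of transposition error described above. The function names, the sample company, and the sample figures are all hypothetical, and the number matching assumes US-style formatting (commas as thousands separators).

```python
import re

# Matches integers and decimals, including thousands separators (e.g. 1,250 or 3.18).
NUMBER_PATTERN = re.compile(r"\d+(?:[.,]\d+)*")


def extract_numbers(text: str) -> set[str]:
    """Return every numeric token in the text, with commas stripped out."""
    return {match.replace(",", "") for match in NUMBER_PATTERN.findall(text)}


def unsupported_numbers(draft: str, source: str) -> set[str]:
    """Numbers that appear in the draft but nowhere in the source material."""
    return extract_numbers(draft) - extract_numbers(source)


# Hypothetical source data and AI draft; the draft transposes two revenue digits.
source = "Acme Corp reported revenue of $1,250 million and earnings of $3.18 per share."
draft = "Acme Corp posted revenue of $1,520 million, with earnings of $3.18 per share."

flagged = unsupported_numbers(draft, source)
print(sorted(flagged))  # numbers an editor should verify by hand
```

A check like this only catches figures with no match in the source. It cannot tell whether a correct number was attached to the wrong company or metric, so it supplements human review rather than replacing it.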

Understanding generative AI's limitations

We've now reached a new tier of possibility with generative models like GPT-3, whose advanced processing and training power allow it to adapt to a much broader range of prompts and content creation use cases than its robot reporter predecessors could manage.

However, generative AI models have fundamental limitations that prevent them from serving as a total replacement for the quality, expertise, and originality that human creators can bring to the content creation process. Here are a few reasons why:

- They will make up facts and present them with confidence and competence. Especially in highly regulated industries such as finance and healthcare, even the inadvertent spreading of misinformation through the negligent use of automated content creation can result in public censures and significant fines from regulatory bodies.

- They do not cite sources or provide information about the reliability of their assertions.

- If they are not ingesting and learning from data in real time, they will not be able to interpret or incorporate awareness of current events.

- Large language models can reinforce bias, prejudice, and misinformation because of the inherent bias and inaccuracy of information within the data they were trained on (i.e., the internet; 'nough said).

- They cannot be relied on for predictions, advice, or recommendations, because their algorithms cannot apply critical thinking, risk evaluation, and real-life experience to these activities. Predictive AI models exist but are an entirely different area of machine learning.

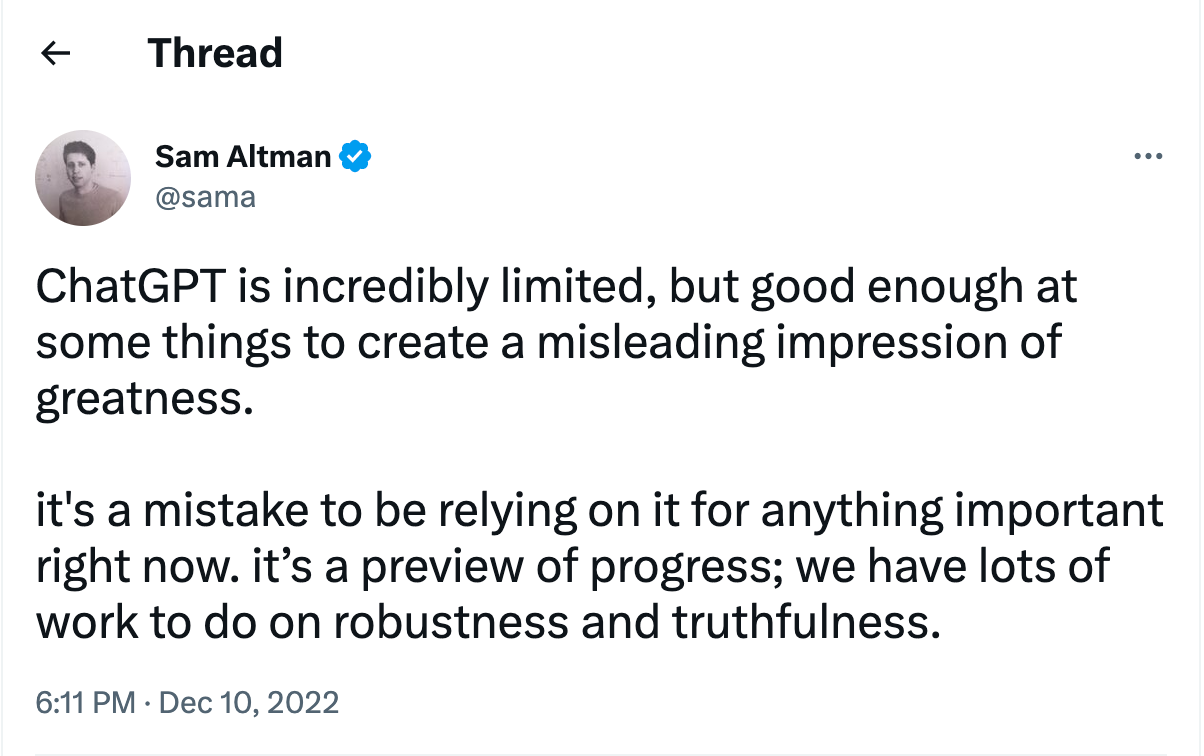

OpenAI's founder, Sam Altman, has openly admitted to many of these risks on Twitter:

Obviously, all these limitations introduce significant reputational risk if generative AI is used to generate thought leadership, advice-driven, or consultative content—which is really the lifeblood of brand content marketing.

These limitations also decrease efficiency, given that any time AI is used to create substantive content from scratch, vigilant human brand oversight, editing, and fact-checking are essential.

The bottom line here: Generative AI is trained to synthesize information and mimic written human interaction, meaning that it's really good at seeming to apply critical thought and regulate itself, but it's not actually capable of it.

So, how can marketers benefit from generative AI?

The key is to think about generative AI as a content enablement tool rather than a content creator in itself. As a company that specializes in content creation, Skyword is already actively using and exploring generative AI in the following areas:

Content planning:

Generative AI can analyze text from source material, such as articles, books, and even conversations, to identify relevant themes and topics. The collected data can then be used to build a framework for the idea and suggest possible directions for development.

Generating ideas and topics:

For example: taking an interview transcript and generating a list of topics to explore in content based on the interview.

Generating content assignments:

For example: taking an identified topic and generating an outline of the subtopics or points to address in a piece of content on the subject.

Creator enablement:

Generative AI's ability to synthesize information and interpret style prompts is a powerful tool to support humans in organizing unstructured ideas and concepts into meaningful text, generating and iterating drafts quickly, and ensuring the final copy is grammatically correct and fluid.

Generating a rough draft:

For example: Taking writer notes, source content, or a topic prompt and using AI to generate sentences that can be used as the foundation for an article. The generated text can then be edited and revised to create a more polished piece. Be aware that without skilled human prompting and polishing, the substance of the initial draft will be relatively generic.

'Cleaning up' and 'punching up' copy:

For example: Taking existing copy and asking AI to improve it by suggesting synonyms, rewording phrases, and offering alternate phrasing options.

Scaling output:

Large language models' understanding of content formats, ability to interpret persona prompts, and skill at mimicking corresponding writing styles mean that they can help quickly reformat content for cross-channel amplification and generate 'new' content options for narrow copywriting tasks.

Personalization:

For example: Taking a piece of content and using AI to incorporate specific language or topic considerations relevant to a particular audience type.

Iterative assets:

For example: Prompting AI to generate a tweet to promote an article or summarize the content and key takeaways of a whitepaper for the download landing page.

Copywriting for promotions, ads, and CTAs:

For example: Asking AI to read a specific piece of text or a combination of text and data and, from there, generate ad copy, promotional copy, or CTA suggestions. This is not necessarily a novel application, as similar slogan generators and copywriting tools have existed for a while. Models like GPT-3 are just better at it and easier to 'tune' with complex prompts.

Optimizing or refreshing content:

For example: Taking an existing article and using AI to incorporate specific keywords or facts (that you provide) and/or prompting it to revise the language to be more effective in terms of readability, engagement, and conversion.

Image selection and generation:

For example: Taking an article and using AI to select an image or images from a specific database (including proper attribution) to associate with the copy. Be aware that the data and methodology used to train AI image generators have prompted several lawsuits and raised enough ethical questions to warrant extreme caution in pursuing such models.

How Skyword is applying generative AI today

Our content marketing platform, Skyword360, now includes Content Atomization, a direct application of GPT-3 technology. Layering AI with proprietary prompt architecture, we're able to offer our clients the ability to identify a primary piece of content as a source and instantly generate iterative assets (social posts, newsletter summaries, shorter articles, video storyboards, etc.) based on the information in the source content, adapting the style and context for different personas and specific brand tones in the process.

That content is then served up for human editorial review, which, as mentioned, is an essential step in the content quality assurance process.

Rather than using AI to generate a lot of 'bot' content from scratch, based on what it knows from 'the internet'—we apply its skill to repurpose and adapt the style of original, high-quality, human-generated content so that it can quickly be amplified, atomized and used across more channels to target multiple personas.

We see this as just one of many ideal ways to marry the power of human creativity with the efficiency of scale that generative AI can skillfully provide.
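
To make the source-to-assets idea more concrete, here is a minimal sketch of how one prompt per derivative asset could be assembled from a single source article. It is an illustration only and does not represent Skyword's proprietary prompt architecture: the `AssetRequest` structure, the `build_prompt` function, the prompt wording, and the sample personas are all assumptions made for this example. Sending the prompts to a model and routing the results to human editorial review are deliberately left out.

```python
from dataclasses import dataclass


@dataclass
class AssetRequest:
    """One derivative asset to produce from a source article (hypothetical structure)."""
    asset_type: str   # e.g. "tweet" or "newsletter summary"
    persona: str      # the audience the asset is written for
    brand_tone: str   # how the copy should sound
    max_words: int    # length limit for the asset


def build_prompt(source_text: str, request: AssetRequest) -> str:
    """Assemble a prompt that restricts the model to the supplied source content."""
    return (
        f"Write a {request.asset_type} of at most {request.max_words} words "
        f"for this audience: {request.persona}.\n"
        f"Tone: {request.brand_tone}.\n"
        "Use only facts stated in the source below; "
        "do not add claims, quotes, or statistics.\n\n"
        f"SOURCE:\n{source_text}"
    )


# Placeholder source content standing in for an original, human-written article.
source_article = (
    "Generative AI works best as a content enablement tool. "
    "Human editors should review every AI-assisted asset before it is published."
)

requests = [
    AssetRequest("tweet", "time-pressed marketing directors", "confident and plain-spoken", 40),
    AssetRequest("newsletter summary", "freelance writers", "warm and practical", 120),
]

# One prompt per requested asset, each grounded in the same source article.
prompts = [build_prompt(source_article, request) for request in requests]
for prompt in prompts:
    print(prompt, end="\n\n---\n\n")
```

Keeping the source text inside every prompt, along with an instruction not to add outside facts, reflects the approach described above: the model adapts existing human-written content rather than generating claims from scratch.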

Future outlook

Likely impact on search engines:

For now, generative AI has yet to prove reliable and discerning enough to replace the entire answer-fetching and research function that search engines provide today.

So, the more immediate issue marketers face is who stands to gain in search as more AI-generated content enters the digital landscape.

Content farms and companies that spend their energy creating content to game search engine algorithms will likely be among the first to start churning out AI-generated content to boost their site visibility. With no malicious intent, small businesses are also interested in turning to the technology to generate content that, otherwise, they simply couldn't afford to support.

As we know, volume plays a critical role in making search gains, and the quality of content generated by AI, like GPT-3, is at least as good as a lot of keyword-stuffed SEO content already out there. However, any gains made by flooding the market with purely automated content are likely to be short-lived as AI-generated content detection becomes more advanced.

Back in August of 2022, Google (which dominates with ~84% of the search market share) announced its Helpful Content update, designed to demote low-value content written for search engines rather than for people, a category that includes much of the mass-produced AI-generated content showing up in search results.

In a nutshell, Google is officially out to detect and favor trustworthy, relevant, and uniquely informative content. Brands whose AI-generated content is engineered to win in search but lacks substance will continue to see their content drop in rankings. On the flip side, developing a consistent base of high-quality, original content will continue to help brands maintain a competitive edge against other sites.

Similarly, the big money already being poured into fact-checking technology designed to identify and crack down on misinformation and misleading content will undoubtedly overlap with an emerging market of AI-generated content detection tools.

Likely impact on the creator ecosystem:

Having spent the early part of my career covering the robotics industry, I'm sensitive to attempts to boil all this down to a ChatGPT vs. Human Creators debate. As we've seen throughout history with the evolution of technology, it's rarely an either/or proposition.

Generative AI and human creators will co-exist, but the way creators work and the career paths available to them will likely change significantly with the advent of this technology. We will explore this topic more deeply in a future post.

For now, what can brands expect in the near future in terms of how they engage with and compensate creators, and what they get from them?

It's reasonable to expect that, for certain rote content assignments like writing promo copy or newsletter summaries, generative AI plus editorial oversight will become as effective as, and more efficient than, employing human creators.

However, expert human creators add irreplaceable value to content when they apply their specialized knowledge and expertise to an assignment. I'm talking about developing unique patterns and insights, providing deep reflection and investigation into complex topics, uncovering as-yet-unknown facts, delivering highly relevant advice, and integrating authentic personal experiences into content.

In the short term, brands are likely to see costs for 'generic' content come down as AI is leveraged to increase the cheap supply of highly templated content types and as more human creators begin to use AI to generate content more quickly.

On the other hand, we're likely to see rates among highly skilled creators and industry experts increase as demand for their skills grows among brands that must rely more on quality and originality to differentiate themselves within an even noisier content landscape.

As to whether or not marketers should be concerned about paying creators who turn in assignments written by AI, it's important to recognize that AI-assistive tools have—in more basic iterations—been leveraged by creators for some time now. At the end of the day, it takes time and skill to prompt AI into generating content that feels creative, insightful, and compellingly unique. Whether or not AI was used doesn't matter so much as whether or not the output is uniquely informative, well-crafted, and trustworthy.

Lean into editorial teams and plagiarism detection tools to judge whether or not content that's turned in meets your brand's standards for quality, topic expertise, and originality, as that is the evidence of real human effort being applied. Specific AI-generated content detection tools are being developed but cannot (yet) reliably determine the level of human vs. machine effort that went into a piece—if that's your goal.
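
For readers curious about what an originality check measures, the sketch below shows one simple, commonly used idea: comparing overlapping word sequences (sometimes called shingles) between a submission and a reference text. It is a toy illustration under stated assumptions, not how any particular plagiarism detection product works; the function names and sample sentences are hypothetical, and real tools compare against very large indexes of published content.

```python
def shingles(text: str, size: int = 3) -> set[tuple[str, ...]]:
    """Return the set of consecutive word sequences of the given length."""
    words = text.lower().split()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def overlap_ratio(submission: str, reference: str, size: int = 3) -> float:
    """Share of the submission's word sequences that also appear in the reference."""
    submitted = shingles(submission, size)
    if not submitted:
        return 0.0  # submission is too short to compare
    return len(submitted & shingles(reference, size)) / len(submitted)


# Hypothetical reference text and submitted copy.
reference = "generative ai is a quantity play not a quality play"
submission = "our view is that generative ai is a quantity play for most brands"

ratio = overlap_ratio(submission, reference)
print(f"{ratio:.0%} of the submission's three-word sequences appear in the reference")
```

A high ratio suggests copied phrasing worth an editor's attention. A low ratio does not prove originality or expertise, which is why editorial judgment remains the deciding factor.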

Likely impact on customer behavior:

This is the question that, as a marketer, I'm most concerned with: What happens to customer trust once AI-generated content goes even more mainstream? Our CEO will dive into this in his next newsletter but—judging by historical patterns—three behaviors are likely to be impacted by the broader use and accessibility of generative AI:

- Buyers' trust in brands and brand marketing will erode as browsers and other platforms build in tools to detect and warn buyers that something was created by AI, and brands' use or non-use of AI-generated content becomes a point of competitive differentiation.

- Buyers will expect even more tailoring, personalization, and immersive experiences from brands as they engage with more AI-powered experiences in their everyday lives. Brands will compete even more on experiential quality and hyper-relevance as impatience with manual 'research' grows.

- Buyers will put even more stock in authentic human recommendations, stories, and customer video reviews when researching products. They may even begin to abandon more traditional digital information platforms as specialized sources of 'verified human' content emerge in response to consumer mistrust.

It will not always be the case, but for now, generative AI is a quantity play, not a quality play, and brands need both quantity and quality to compete in today's marketing environment. So, by all means, explore and test generative AI as an efficiency and enablement tool for your content creation, but avoid falling into the trap of thinking it can serve as a complete replacement for human content creators.

Brands will have to master this technology (on the content production and brand experience sides of the house) to compete moving forward. So, work with vendors who know the technology, apply it in the right ways, and can manage and mitigate any risks for you.

I encourage you to subscribe to our newsletter if you're interested in getting more content in our ongoing series on generative AI delivered straight to your inbox. Book a meeting with our team for an inside look at how we're using generative AI at Skyword to improve our brand clients' content creation efficiency without compromising their quality or brand integrity.